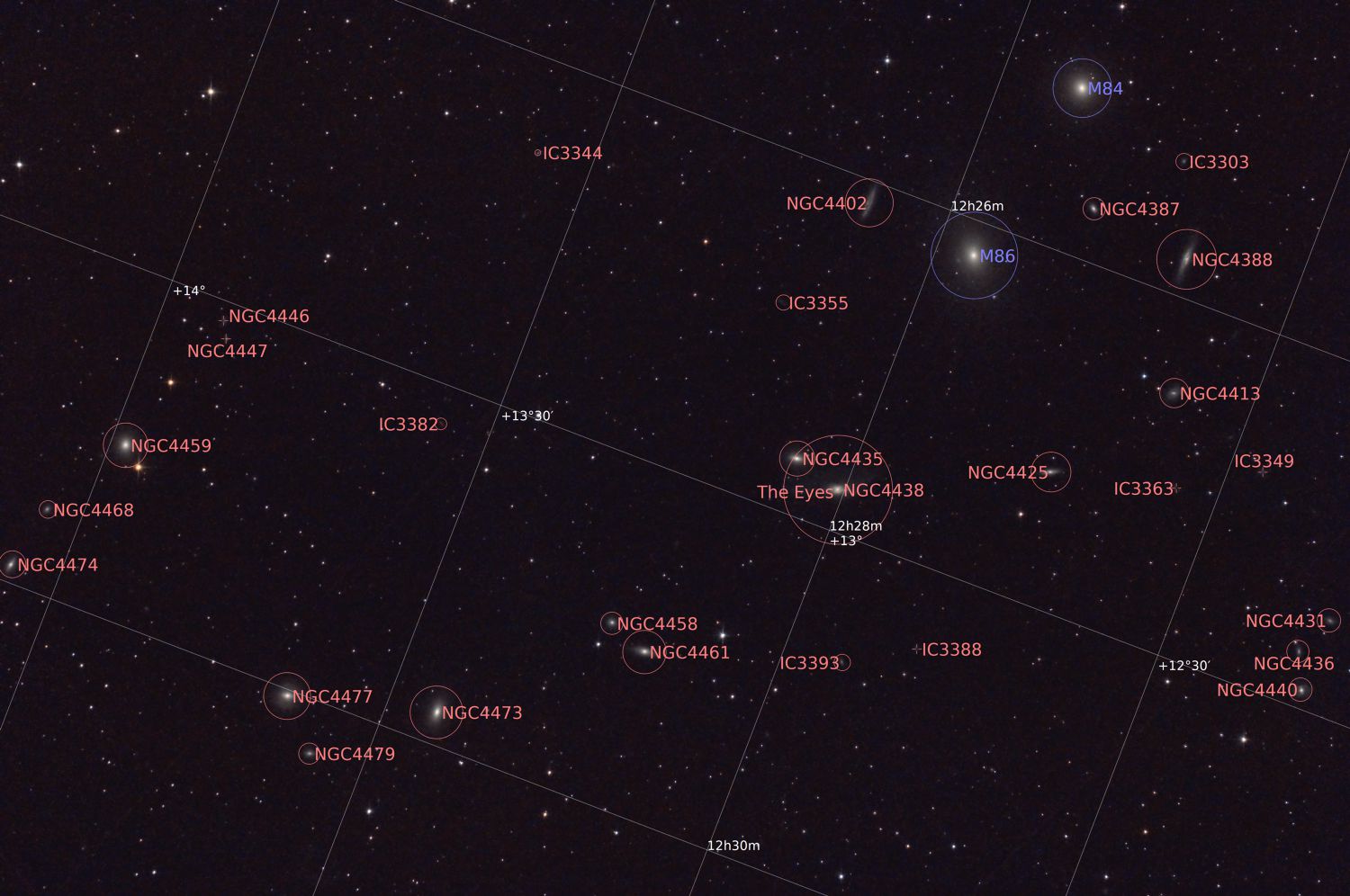

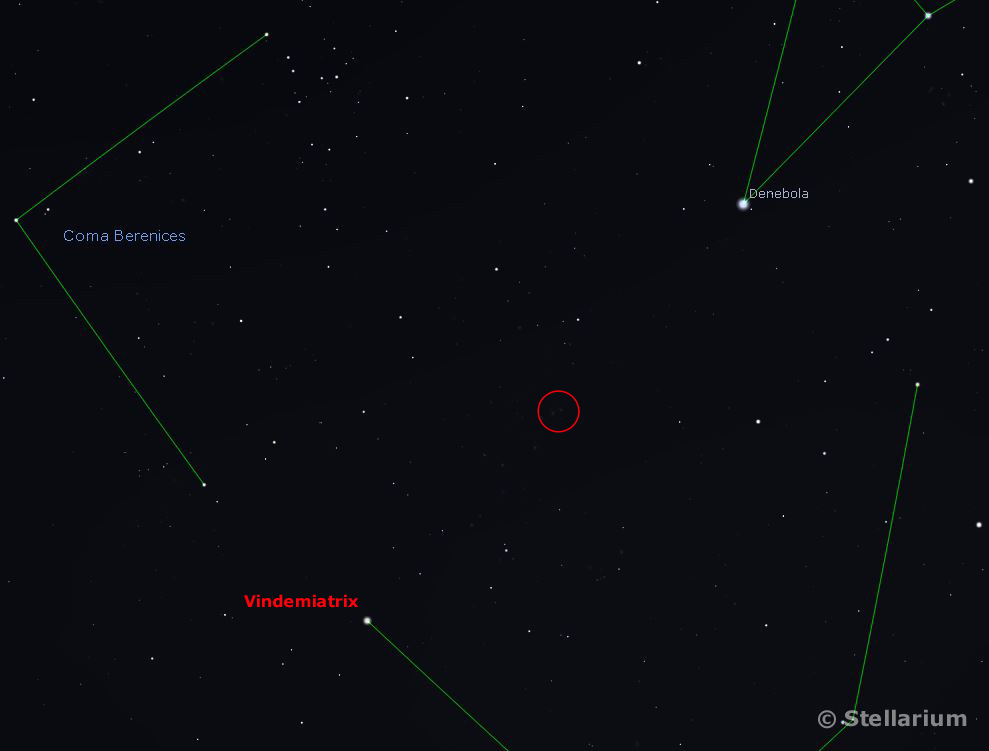

Markarian's Chain consists of a sequence of galaxies which form part of the Virgo Cluster. Two of the larger galaxies were discovered by Charles Messier in 1781 and added to his 'non-comet' catalogue as M84 and M86.

Also in James' widefield image are M87 and M88. For a close-up of these and numerous PGC galaxies, see the Magnifier page.

The Virgo Cluster is a large cluster of galaxies centred about 54 million light-years from the Milky Way.

For more information, see the Wikipedia entry.